Internal Contradictions and the Displacement of Learner-Centred Programming

A close reading of the LBS logic model reveals internal contradictions about who the system is designed to support. It also reveals statements that displace the traditional philosophical approach of LBS: learner-centred programming.

First, the contradiction. One of the main findings from the LBS evaluation—a lack of vision and inconsistent messaging about who the program is intended to support—can be directly linked to an internal contradiction in the logic model. The stated purpose of the LBS system is

to help adults in Ontario […] including learners who may have a range of barriers to learning.

This appears to be an inclusive and equitable statement. But it doesn’t stand alone. In the target statements that follow, the OECD’s international testing levels (used in the IALS, IALSS and PIAAC) and the OALCF levels are used to define who can access LBS:

Adult learners, Ontario residents, who have their literacy and basic skills assessed at intake as less than the end of Level 3 of the International Adult Literacy and Skills Survey (IALSS) or the Ontario Adult Literacy Curriculum Framework (OALCF)…

Several problems arise as a result of the target statements:

- They don’t connect directly to the purpose statement or help one identify “a range of barriers to learning” and who may have these. Adult learners are those Ontarians who achieve a certain level of proficiency. This also suggests that the assessments are entry requirements.

- The two Level 3 standards are different. Level 3 on the IALSS is not the same as Level 3 on the OALCF. Having two distinct measures, neither of which supports the purpose, is inconsistent and contradictory.

- In addition, IALSS levels are mostly irrelevant for the LBS system. Level 3 is irrelevant since test-takers at the beginning of Level 3 have completed college, and those in the middle of Level 3 have completed university. The LBS system does not provide support for those in the postsecondary system (with the exception of preparatory programs). In addition, the IALSS scale can’t be used for those with abilities aligned with an elementary education level. Test-takers don’t place on the scale unless they have completed 8-10 years of formal education.

- The OALCF scale operates differently than the IALSS. Although it shares some common elements with international testing methods, it doesn’t share enough of these to produce similar testing results.

- The OALCF scale also requires LBS participants to have some literacy knowledge to be placed at Level 1, potentially blocking their participation.

While the purpose statement appears to be somewhat equitable and inclusive, directing programs and policy staff to support those with “learning barriers,” the accompanying target statements undermine and confuse the purpose. They can also be used to create additional learning barriers, exacerbating existing inequalities.

Of course policy staff and program staff are confused, wondering who is eligible to participate and what the main intent of participation in LBS is. Is the main aim of the program to demonstrate a level improvement or to mediate “learning barriers”? If one can’t complete an IALSS spin-off test or OALCF assessment, are they ineligible?

The chosen assessment methods and the decision to use an assessment as a condition for participation are barriers to inclusive and equitable participation. This is one example of inequality by design, one that prevents equitable and inclusive access to LBS for those Ontarians who have no other supported learning opportunities. The targets also suggest that those who aren’t able to draw on their skills to pass a test cannot access the program.

From Learner-centred to Employment-centred

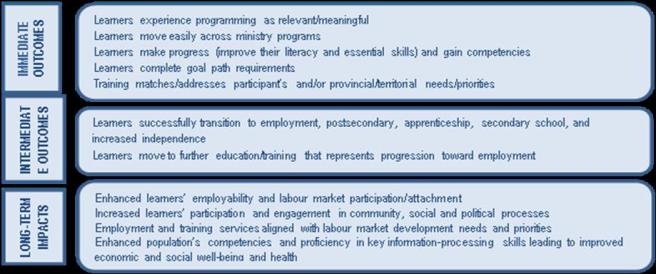

The logic model provides a rationale and justification for pressuring learners to pursue employment goals over personal goals. It also provides a rationale for valuing and encouraging short-term employment outcomes over the achievement of education-related aims and associated credentials. One of the stated immediate outcomes in the logic model is the following:

Training matches/addresses participants’ and/or provincial/territorial needs/priorities.

Here we see the tradition of learner-centred programming compromised, particularly if the learner’s reasons for participating in a program don’t align with provincial “needs/priorities.” The ministry gives itself the right to persuade and pressure adults (and the educators who work with them) to learn what it deems a priority.

The ministry’s right to control training—that is what is learned and how it is learned—is asserted again in a long-term impact statement:

Employment and training services [are] aligned with labour market development needs and priorities.

This time, the learner is completely absent from the statement. Learner-centred programming is turned into employment-centred programming. The logic model provides a justification for policy staff to override and disregard a participant’s personal aims, goals and concerns.

How this plays out in local programs will of course vary. Some may indeed “turn away” from supporting personal learning aspirations, while others strive to maintain learner-centred programming. But to do so, they have to spend time and effort working against the LBS system and for learners. This is a second example of an inequality by design, one that doesn’t respect the right of individuals to decide what kinds of literacy learning would be most useful in their lives, for their families and communities.

Further advancing the employment-centred focus is another intermediate outcome statement that qualifies the value of educational goals. The first intermediate outcome is that

Learners successfully transition to employment, postsecondary, apprenticeship, secondary school, and increased independence.

The second intermediate outcome statement is a qualifier (my emphasis):

Learners move to further education/training that represents progression toward employment.

It’s possible that the intent of the support statement is to include educational activities that are left unnamed in the goal paths, such as completing a GED test or entering a vocational training program. At the same time (particularly considering the employment-centred focus) the statement undermines the value of education as a goal that can be pursued for its own inherent value and for a credential. Education is valued only if it leads to a job. The credential, of great value to the learner (and employers and other educational institutions) is not mentioned.

We can see the devaluation of education play out in exit and follow-up reporting that requires extensive tracking of employment and wages and no tracking of learners based on their educational achievements. What happens to LBS participants once they complete an ACE program (postsecondary path) or achieve PLAR credits for mature students (secondary path) or pass the GED? What opportunities can they now pursue? What barriers have been mitigated?

A related problem is the exclusion of the GED as a named goal. Many remote programs rely on the GED as a means to support Indigenous learners. Not naming the GED delegitimizes it and the students who pursue it. We don’t even know how the goal is categorized by programs and are unable to track it.

Here are two additional examples of inequality by design: 1) the overall devaluation of educational achievements, even though the majority in programs have these goals, and 2) the exclusion of the GED as a named goal for many Indigenous learners.

A Hierarchy of Outcomes and Impact Statements

It is the final section of the LBS logic model, the long-term impacts, that is the most powerful and crucial to understand. Measures of long-term impacts are most important to develop in order to demonstrate overall system effectiveness. While immediate outcomes are somewhat useful, and the ones that were examined by LBS evaluators, it is the long-term impacts that run the system.

In the next post, I will go beyond the text in the logic model to examine the assumptions and measurement methods carried into it in order to demonstrate the long-term impacts.

Hi Christine,

Thanks for the reply.

I agree that a clearer articulation of the LBS program’s target is very much needed.

What part of the logic model do you think is causing LBS programs to avoid learners/clients unlikely to pass one OALCF Milestone (by March 31 each year)?

Would the problem be solved if the Logic Model’s Target was changed to:

Adult Learner, Ontario residents, who are at least 19 years old; who are proficient enough in speaking and listening to benefit from the language of LBS instruction (English or French)….

Though not referencing the OALCF, we would probably still get hit by the Outputs of “Consistent learning progress measures and standards across learners and service providers”, as well as the Immediate Outcomes of “Learners make progress”.

Do you think the model used to gauge literacy development for the purposes of describing the intended audience or target for a program (we serve ABC) and the model used to detect literacy development for the purposes of demonstrating a program’s impact must be the same model?

I very much look forward to reading the next posts.

Alan

Great questions and thoughts. The specific part of the target statement that is of concern is “who have their literacy and basic skills assessed at intake at less than the end of Level 3 of the International Adult Literacy and Skills Survey (IALSS) or the Ontario Adult Literacy Curriculum Framework (OALCF)…” (my emphasis). This indicates that adults must take an entry test to be eligible for the program and not simply be able to complete an OALCF Milestone by March 31.

Logic model designers may not have realized that people don’t score at Level 1 on the IALSS unless they have about 8-10 years of education, since their main concern was demonstrating the mythical Level 3 standard. Even though the OALCF is an interpretation of this scale, and not aligned, it too is inaccessible to those with only an elementary level of education or less. Yes, there are many Milestones that aren’t levelled or don’t follow the underpinning framework, like digital technology or verbal communication Milestones. But some may refer to a reading-related Milestone as a more accurate indicator of eligibility, since this is a literacy development program, and quickly recognize that many learners can’t be placed on the OALCF scale based on their reading.

I just ran Milestone A1.1 Read a classified ad through a readability analysis. The average grade level is 6.2. In addition, a test-taker would need to be familiar with test-taking conventions and have enough experience with texts at this grade level when reading something out of context and unfamiliar, likely bumping up the difficulty. This is a big concern for many programs and it’s simply unfair and inequitable.

I agree with your interpretation of the way some of the output statements (i.e. competency-based programming, consistency in competencies and assessment across the province, consistent progress measures) and some immediate outcomes (i.e. learners make progress, complete goal path requirements) are tied up with the OALCF curriculum framework and the tests used to indicate those levels.

Integrating any measurement method into a logic model is a big problem, as it creates an inflexible and very narrow view of program activity. The integration of IALSS/OALCF has exacerbated the issue since the two measurement methods are not aligned (causing confusion and preventing the development of a coherent and valid approach to assessment), and both can be used to prevent equitable access to programs, particularly for those with 0-8 years of education. Even if people in programs decide to simply ignore the IALSS/OALCF target statements and not rely on an intake assessment for program entry, they still have to justify their good decisions and may be pressured to do otherwise by ETCs. To be equitable and provide meaningful and responsive programming, programs and ETCs have to game the system and work against the logic model and accompanying assessment system that they are mandated to use.

Hi Christine, I think your interpretation of the “Purpose” in the logic model may be a little too narrow.

“To help adults develop and apply…skills to transition to…, including learners who may have a range of barriers to learning.” Transition (for better or worse) is the key element, not exclusively and only those who have a range of barriers.

I know there were discussions about NEW intake screening tests that would render many “failing” learners ineligible for LBS training. Are you currently aware of Ontario adult learners blocked from participation in an LBS program because they could not take or score on an intake assessment? I always understood Level 3 as intended only as an exclusionary upper limit. There are in fact a good number of learners in the LBS program with a postsecondary education.

I have always struggled with the meaning of learner-centred and I question if there ever was a traditional or more genuine implementation. It was explained to me (by a now retired LBS administrator) as meaning that the program was focussed on serving learners matching a set of suitability criteria. Not so much programming centred on the needs of an individual learner, but rather a program targeted at a specific learner audience. That is how it is reflected in the performance management framework.

Re the alignment of the program to this or that, I take a slightly more generous interpretation. I think the intention of the alignment statements is to address some programming and training delivery that was traditionally aligned more with the needs of the local programs or instructors. MAESD may be ignoring them, but I don’t think it is accurate to fault the logic model and ignore the presence of the 4 other stated immediate outcomes.

Thanks for advancing the debate and discussion.

Alan

Thanks Alan for the careful read and comments. I agree, I did focus on the barriers concept and not transition when looking at the purpose. I was thinking about one of the findings in the LBS evaluation which described the way the vision for LBS and who it is supposed to serve seems blurred and unclear. Now I would add that both transition and barriers are subsumed by the target statements. A better articulation of transitions and barriers in the logic model and accompanying frameworks and measures would be a way forward.

With regard to the question about blocking learners, one of the findings in the evaluation report was that programs were “creaming” and working with those who could readily complete Milestones. Programs may be referring more challenging learners to other programs or keeping them off the books and working with them unofficially. These activities have been documented. My main argument is that the international literacy testing levels are simply an inappropriate model, even with adaptations, to gauge and detect literacy development. They are particularly inappropriate for adults with an elementary equivalent education or less. Even PIAAC has a new category: “below Level 1”. This is neither a useful nor a supportive hierarchy for a learning program.

You raise a very important question about the many meanings attached to learner-centred programming. I definitely don’t advocate a return to the previous interpretation, in which learner-centred became tied up in five LBS levels and skill achievement indicators. It’s interesting to learn that the term was interpreted via the suitability indicators, devised by the ministry to prioritize their concerns and interests. I support a vision of learner-centredness in which the individual’s articulation of their interests, aims, pursuits, and goals remains intact and isn’t reformulated to fit into a set of skills or tasks or competencies or suitability criteria.

I’m glad you raised a key point about the category called immediate outcomes. These were the ones that LBS evaluators examined, concluding that programs are doing a great job, despite the many identified challenges. The five immediate outcomes along with the first intermediate outcome statement weren’t the target of critique. These are aspects of the logic model that are general enough and flexible enough to serve as decent guides. This could have been stated more explicitly.

My main concerns are the use of international testing levels and related measures, the absence of stakeholder expertise, the displacement of learners’ personal goals, the undervaluing of educational outcomes, the primacy of employability, and the authority the ministry has given itself to implement an unfair and unvalidated high-stakes assessment framework that drives programming and pedagogy.
